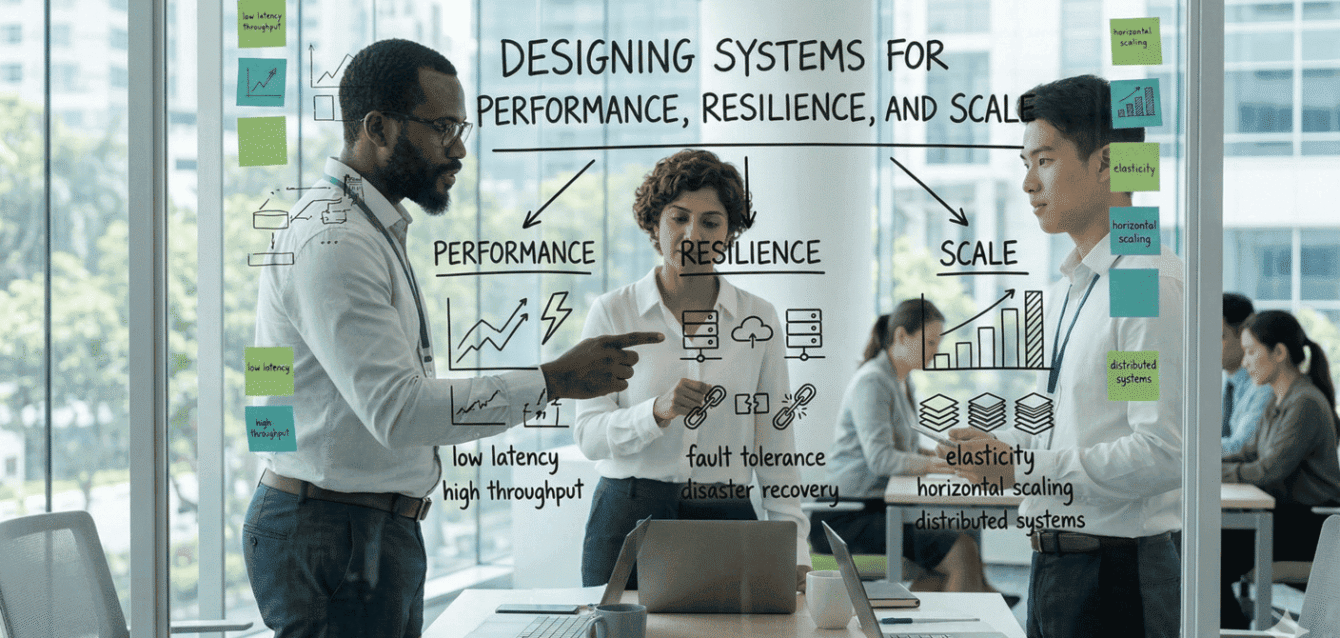

In today’s digital-first landscape, building systems is no longer just about delivering functionality—it’s about engineering platforms that can evolve, adapt, and sustain growth under unpredictable conditions. Performance, resilience, and scalability are no longer technical considerations alone; they are fundamental to business continuity and long-term success.

Organizations that approach system design with an architecture-first mindset are better positioned to handle increasing demand, reduce operational risk, and deliver consistent user experiences—even under stress. The real challenge lies not in building systems that work today, but in designing systems that continue to perform tomorrow.

Designing for Scale: Enabling Growth Without Friction

Scalability is not simply about handling more users—it’s about doing so without compromising performance or stability. As systems grow, the underlying architecture must allow them to expand seamlessly.

Modern systems move away from rigid, monolithic designs toward distributed architectures where scaling becomes more granular and controlled. Instead of increasing the capacity of a single system, organizations adopt horizontal scaling strategies—adding more instances to distribute load dynamically. This approach offers both flexibility and cost efficiency, especially in cloud-native environments.
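As a rough illustration of horizontal scaling, the sketch below rotates requests across a pool of instances and lets new instances join at runtime. The class name `RoundRobinBalancer` and the instance names are hypothetical, and round-robin is only one of several possible distribution strategies.

```python
from typing import List


class RoundRobinBalancer:
    """Hypothetical balancer that rotates requests across instances."""

    def __init__(self, instances: List[str]) -> None:
        self._instances = list(instances)
        self._next = 0  # running count of picks, used to rotate

    def add_instance(self, name: str) -> None:
        # Scaling out: a new instance simply joins the rotation.
        self._instances.append(name)

    def pick(self) -> str:
        if not self._instances:
            raise RuntimeError("no instances available")
        instance = self._instances[self._next % len(self._instances)]
        self._next += 1
        return instance


balancer = RoundRobinBalancer(["app-1", "app-2"])
first_round = [balancer.pick() for _ in range(3)]
balancer.add_instance("app-3")  # capacity added under load
second_round = [balancer.pick() for _ in range(3)]
```

In a real deployment this role is usually played by a managed load balancer or an orchestrator rather than application code; the sketch only shows the idea of spreading load over a growing pool.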

Equally important is the shift toward modular architectures such as microservices. By breaking applications down into smaller, independent services, organizations can scale specific components based on demand rather than the entire system. Combined with stateless design, where session and request state lives in a shared store rather than in the memory of a single instance, this enables true elasticity: any service instance can handle any request, so instances can be added or removed without disrupting users.
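To make the stateless principle concrete, here is a minimal sketch in which two service instances share an external session store, so either one can continue a user's session. `SharedSessionStore` and `ServiceInstance` are hypothetical names; the in-memory dictionary stands in for whatever shared store a real system would use.

```python
from typing import Dict, List


class SharedSessionStore:
    """Hypothetical stand-in for an external store shared by all instances."""

    def __init__(self) -> None:
        self._carts: Dict[str, List[str]] = {}

    def load(self, session_id: str) -> List[str]:
        # Return a copy so callers cannot mutate stored state by accident.
        return list(self._carts.get(session_id, []))

    def save(self, session_id: str, cart: List[str]) -> None:
        self._carts[session_id] = list(cart)


class ServiceInstance:
    """One replica of the service. It keeps no per-user state of its own."""

    def __init__(self, name: str, store: SharedSessionStore) -> None:
        self.name = name
        self.store = store

    def add_to_cart(self, session_id: str, item: str) -> List[str]:
        cart = self.store.load(session_id)  # state comes from the shared store
        cart.append(item)
        self.store.save(session_id, cart)   # and goes straight back to it
        return cart


store = SharedSessionStore()
instance_a = ServiceInstance("a", store)
instance_b = ServiceInstance("b", store)
instance_a.add_to_cart("session-1", "book")
cart = instance_b.add_to_cart("session-1", "pen")  # a different instance continues
```

Because neither instance remembers anything between calls, either can be removed or replaced without losing the user's cart.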

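As data volumes grow, scalability challenges extend to the data layer as well. Techniques like database partitioning and sharding split data across multiple nodes, typically by a key such as a customer or region identifier, so that each query touches only a fraction of the data. This keeps data access efficient and helps prevent bottlenecks that would otherwise degrade system performance.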
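One simple way to picture sharding is hash-based routing: a stable hash of the key decides which shard holds the record. The sketch below assumes a fixed number of shards and uses in-memory dictionaries in place of real databases; `shard_for` and `ShardedStore` are illustrative names.

```python
import hashlib
from typing import Any, Dict, List


def shard_for(key: str, num_shards: int) -> int:
    """Map a key to a shard index using a hash that is stable across runs."""
    digest = hashlib.sha256(key.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % num_shards


class ShardedStore:
    """Hypothetical key-value store split across several shards."""

    def __init__(self, num_shards: int) -> None:
        # Each dict stands in for a separate database node.
        self.shards: List[Dict[str, Any]] = [{} for _ in range(num_shards)]

    def put(self, key: str, value: Any) -> None:
        self.shards[shard_for(key, len(self.shards))][key] = value

    def get(self, key: str, default: Any = None) -> Any:
        return self.shards[shard_for(key, len(self.shards))].get(key, default)


sharded = ShardedStore(num_shards=4)
for i in range(100):
    sharded.put(f"user:{i}", {"id": i})
```

Note that plain modulo routing remaps most keys when the shard count changes; consistent hashing is a common alternative when shards are added or removed often.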

Ultimately, scalable systems are those that are designed to grow deliberately—where capacity, performance, and cost remain balanced as demand evolves.

Optimizing for Performance: Delivering Speed at Scale

Performance is often the most visible measure of system quality. Slow systems impact user experience, reduce engagement, and directly affect business outcomes. However, achieving performance at scale requires more than infrastructure—it demands thoughtful engineering across every layer.

A key principle is distributing workload effectively. Load balancing spreads requests across instances so that no single one becomes a bottleneck, while caching reduces repeated computations and database calls by storing frequently accessed data closer to the user. Together, these can significantly improve response times and reduce backend strain.
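The caching idea can be sketched as a small cache-aside helper with a time-to-live: a request first checks the cache and only calls the slower backend on a miss or after the entry expires. `TTLCache` and `load_profile` are hypothetical names, and the injectable `clock` parameter is an assumption added to keep the example easy to test.

```python
import time
from typing import Any, Callable, Dict, List, Tuple


class TTLCache:
    """Hypothetical cache-aside helper with per-entry expiry."""

    def __init__(self, ttl_seconds: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.ttl = ttl_seconds
        self.clock = clock
        self._entries: Dict[str, Tuple[float, Any]] = {}  # key -> (expiry, value)

    def get_or_load(self, key: str, loader: Callable[[str], Any]) -> Any:
        now = self.clock()
        entry = self._entries.get(key)
        if entry is not None and entry[0] > now:
            return entry[1]      # cache hit: no backend call
        value = loader(key)      # miss or expired: go to the backend
        self._entries[key] = (now + self.ttl, value)
        return value


backend_calls: List[str] = []


def load_profile(user_id: str) -> dict:
    # Stand-in for a slow database query.
    backend_calls.append(user_id)
    return {"id": user_id}


profile_cache = TTLCache(ttl_seconds=60)
profile_cache.get_or_load("u1", load_profile)
profile_cache.get_or_load("u1", load_profile)  # served from the cache
```

Choosing the TTL is a trade-off: longer values reduce backend load further but increase the chance of serving stale data.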

Modern systems also embrace asynchronous processing to handle high volumes of concurrent activity. Instead of completing all work inside the request path, non-critical or long-running tasks are handed off to queues and background workers. This keeps core user interactions fast and responsive, even during peak demand.
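A minimal sketch of this offloading pattern, using only the Python standard library: the request handler enqueues the slow task and returns immediately, while a background thread drains the queue. `BackgroundWorker` and `handle_signup` are hypothetical names, and a production system would more likely use a durable message queue than an in-process one.

```python
import queue
import threading
from typing import Any, Callable, List


class BackgroundWorker:
    """Hypothetical in-process worker that runs queued tasks on one thread."""

    def __init__(self) -> None:
        self._tasks: queue.Queue = queue.Queue()
        self.errors: List[Exception] = []
        threading.Thread(target=self._run, daemon=True).start()

    def submit(self, func: Callable[..., Any], *args: Any) -> None:
        self._tasks.put((func, args))

    def _run(self) -> None:
        while True:
            func, args = self._tasks.get()
            try:
                func(*args)
            except Exception as exc:
                # Record the failure; a real system would log and maybe retry.
                self.errors.append(exc)
            finally:
                self._tasks.task_done()

    def wait_until_idle(self) -> None:
        self._tasks.join()  # blocks until every queued task has finished


def handle_signup(worker: BackgroundWorker, email: str, outbox: List[str]) -> dict:
    # The welcome email is non-critical, so it is queued instead of sent inline.
    worker.submit(outbox.append, email)
    return {"status": "accepted", "email": email}


worker = BackgroundWorker()
outbox: List[str] = []
response = handle_signup(worker, "ada@example.com", outbox)
worker.wait_until_idle()
```

The handler's response does not depend on the email being sent, which is what keeps the user-facing path short.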

Performance optimization is further strengthened through platform engineering practices. By leveraging infrastructure as code, containerization, and orchestration platforms, organizations can standardize environments, automate scaling, and reduce operational inconsistencies. This not only improves system efficiency but also accelerates delivery cycles and reduces time to market.

In essence, performance is not a single optimization—it is the outcome of multiple architectural decisions working together cohesively.

Engineering for Resilience: Building Systems That Withstand Failure

In distributed systems, failure is not a possibility—it is an inevitability. The true measure of a system is not whether it fails, but how it responds when it does.

Resilient systems are designed with the assumption that components will fail. Instead of striving for perfection, they are built to absorb disruptions and recover quickly. This begins with redundancy—ensuring that critical components have backups and failover mechanisms that can take over seamlessly.
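Failover can be illustrated with a small helper that tries each redundant replica in order and returns the first successful answer. The function `call_with_failover` and the replica callables are hypothetical; real failover is usually handled by infrastructure such as load balancers or database drivers.

```python
from typing import Any, Callable, Optional, Sequence


def call_with_failover(replicas: Sequence[Callable[[str], Any]], request: str) -> Any:
    """Try each replica in order and return the first successful response."""
    last_error: Optional[Exception] = None
    for replica in replicas:
        try:
            return replica(request)
        except Exception as exc:
            last_error = exc  # remember the failure and move to the next replica
    raise RuntimeError("all replicas failed") from last_error


def primary(request: str) -> str:
    raise ConnectionError("primary is down")  # simulated outage


def secondary(request: str) -> str:
    return f"secondary handled {request}"


result = call_with_failover([primary, secondary], "GET /orders")
```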

Equally important is the concept of graceful degradation. When systems are under stress, non-essential features can be temporarily reduced or disabled to preserve core functionality. This ensures that users continue to experience critical services even during partial outages.
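As a sketch of graceful degradation, the function below always returns the core product data but treats recommendations as optional: they are skipped under stress or if their service fails. All names here (`build_product_page`, `fetch_product`, `fetch_recommendations`, the `under_stress` flag) are hypothetical.

```python
from typing import Any, Callable, Dict, List


def build_product_page(
    product_id: str,
    fetch_product: Callable[[str], Dict[str, Any]],
    fetch_recommendations: Callable[[str], List[str]],
    under_stress: bool = False,
) -> Dict[str, Any]:
    """Return the core page, dropping optional content when necessary."""
    page: Dict[str, Any] = {
        "product": fetch_product(product_id),  # core content, always required
        "recommendations": [],
        "degraded": False,
    }
    if under_stress:
        page["degraded"] = True  # shed the optional feature to save capacity
        return page
    try:
        page["recommendations"] = fetch_recommendations(product_id)
    except Exception:
        page["degraded"] = True  # optional feature failed; keep the page usable
    return page


def fetch_product(product_id: str) -> Dict[str, Any]:
    return {"id": product_id, "name": "Widget"}


def broken_recommendations(product_id: str) -> List[str]:
    raise TimeoutError("recommendation service timed out")


page = build_product_page("p1", fetch_product, broken_recommendations)
```

How the `under_stress` signal is derived (load metrics, error rates, a manual switch) is left open here and depends on the platform.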

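To prevent failures from spreading across the system, patterns such as circuit breakers and controlled retries are implemented. A circuit breaker stops sending calls to a dependency that keeps failing and gives it time to recover, while retries are capped and spaced out so that they do not add load to a struggling service. These mechanisms isolate issues, preventing cascading failures that can bring down entire platforms.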
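The two patterns can be sketched together as follows. This is a simplified illustration, not a production implementation: the thresholds, the `CircuitBreaker` and `call_with_retries` names, and the injectable `clock` and `sleep` parameters are all assumptions made for the example.

```python
import time
from typing import Any, Callable, Optional


class CircuitOpenError(Exception):
    """Raised when a call is skipped because the circuit is open."""


class CircuitBreaker:
    def __init__(self, failure_threshold: int = 3, reset_timeout: float = 30.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.failure_threshold = failure_threshold
        self.reset_timeout = reset_timeout
        self.clock = clock
        self.failures = 0
        self.opened_at: Optional[float] = None

    def call(self, func: Callable[..., Any], *args: Any, **kwargs: Any) -> Any:
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_timeout:
                raise CircuitOpenError("circuit open; call skipped")
            # Timeout elapsed: half-open, so one trial call is let through.
        try:
            result = func(*args, **kwargs)
        except Exception:
            self.failures += 1
            if self.opened_at is not None or self.failures >= self.failure_threshold:
                self.opened_at = self.clock()  # open (or re-open) the circuit
            raise
        self.failures = 0
        self.opened_at = None  # success closes the circuit
        return result


def call_with_retries(func: Callable[[], Any], attempts: int = 3,
                      base_delay: float = 0.1,
                      sleep: Callable[[float], None] = time.sleep) -> Any:
    """Retry a call a bounded number of times with exponential backoff."""
    for attempt in range(attempts):
        try:
            return func()
        except CircuitOpenError:
            raise  # never retry against an open circuit
        except Exception:
            if attempt == attempts - 1:
                raise
            sleep(base_delay * (2 ** attempt))
```

A caller might combine them as `call_with_retries(lambda: breaker.call(fetch_inventory))`, where `breaker` is a `CircuitBreaker` instance and `fetch_inventory` is a hypothetical remote call. Retries then handle brief glitches, and the breaker cuts off traffic once failures persist.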

Resilience is not achieved through design alone—it must be continuously validated. Practices such as chaos engineering introduce controlled failures into the system to test its behavior under real-world conditions. Combined with continuous testing across performance, security, and functionality, this approach ensures that systems remain robust as they evolve.
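A toy version of a chaos experiment is sketched below: a wrapper injects failures into a dependency at a chosen rate, and the experiment checks that every request still receives an answer through a fallback. The names `with_chaos`, `lookup_price`, `price_with_fallback`, and `run_experiment` are hypothetical, and real chaos engineering targets infrastructure (instances, networks, dependencies) rather than a single function.

```python
import random
from typing import Any, Callable, Dict, Tuple


class InjectedFault(Exception):
    """Raised by the chaos wrapper to simulate a failing dependency."""


def with_chaos(func: Callable[..., Any], failure_rate: float,
               rng: random.Random) -> Callable[..., Any]:
    """Wrap func so that a fraction of calls fail on purpose."""
    def wrapper(*args: Any, **kwargs: Any) -> Any:
        if rng.random() < failure_rate:
            raise InjectedFault("injected failure")
        return func(*args, **kwargs)
    return wrapper


def lookup_price(sku: str) -> Dict[str, Any]:
    return {"sku": sku, "price": 10}  # stand-in for a remote pricing service


def price_with_fallback(lookup: Callable[[str], Dict[str, Any]],
                        sku: str) -> Dict[str, Any]:
    try:
        return lookup(sku)
    except Exception:
        return {"sku": sku, "price": None}  # degraded but still a valid answer


def run_experiment(failure_rate: float, requests: int = 100,
                   seed: int = 42) -> Tuple[int, int]:
    """Return (answered, degraded) counts for a run with injected failures."""
    rng = random.Random(seed)  # seeded so the experiment is repeatable
    flaky_lookup = with_chaos(lookup_price, failure_rate, rng)
    results = [price_with_fallback(flaky_lookup, f"sku-{i}") for i in range(requests)]
    degraded = sum(1 for r in results if r["price"] is None)
    return len(results), degraded


answered, degraded = run_experiment(failure_rate=0.3)
```

The useful signal is that `answered` equals the number of requests regardless of the failure rate, while `degraded` shows how much functionality was lost.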

Organizations that invest in resilience are not just reducing downtime—they are building trust, reliability, and operational confidence into their digital platforms.

Conclusion: From Systems to Strategic Platforms

Designing for performance, resilience, and scale is no longer a technical exercise—it is a strategic capability. Systems built with these principles are better equipped to handle uncertainty, support growth, and adapt to changing business needs.

By embracing modular architectures, cloud-native scalability, asynchronous processing, and continuously validated resilience strategies, organizations can move beyond reactive problem-solving toward proactive system design.

The result is not just a system that works—but a platform that evolves, scales, and sustains business momentum.